Imagine there are no variable names. Imagine working – in 2016 – with registers. Imagine one-minute file load times. Imagine that all commands are just numbers. Imagine there’s no usable string processing.

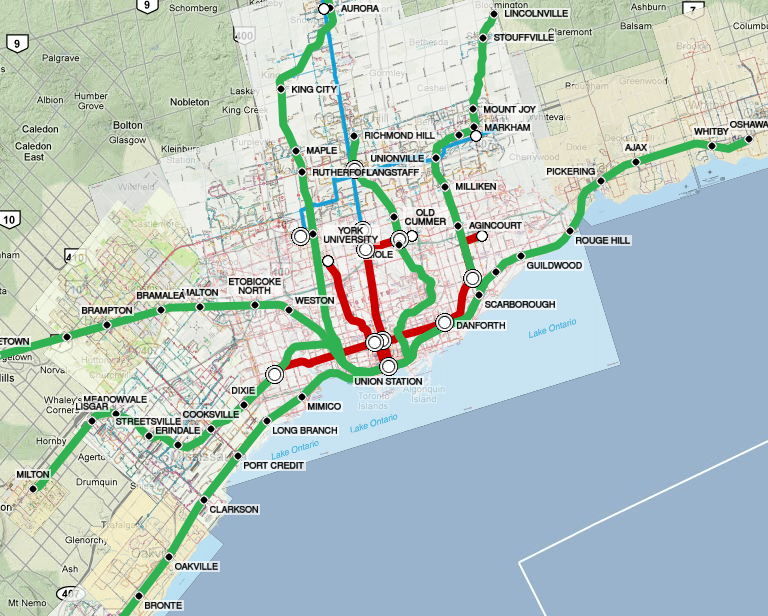

Welcome to Emme 3. During the years that I worked in travel demand forecasting, this was the main tool available to me.

Emme was undoubtedly a trailblazing innovator when it first came out in 1982, and it remained a power user’s dream through to the early 90s. But it clearly missed the Windows boat; the software seems to have stagnated until a revival began in the late 2000s.